This is the Linux app named OpenLLM, whose latest release can be downloaded as v0.3.9sourcecode.zip. It can be run online with OnWorks, a free hosting provider for workstations.

Download this app named OpenLLM and run it online with OnWorks for free.

Follow these instructions in order to run this app:

- 1. Download this application to your PC.

- 2. Open our file manager at https://www.onworks.net/myfiles.php?username=XXXXX, using the username that you want.

- 3. Upload this application to that file manager.

- 4. Start the OnWorks Linux online emulator, Windows online emulator, or macOS online emulator from this website.

- 5. From the OnWorks Linux OS you have just started, go to our file manager at https://www.onworks.net/myfiles.php?username=XXXXX, using the username that you want.

- 6. Download the application, install it and run it.

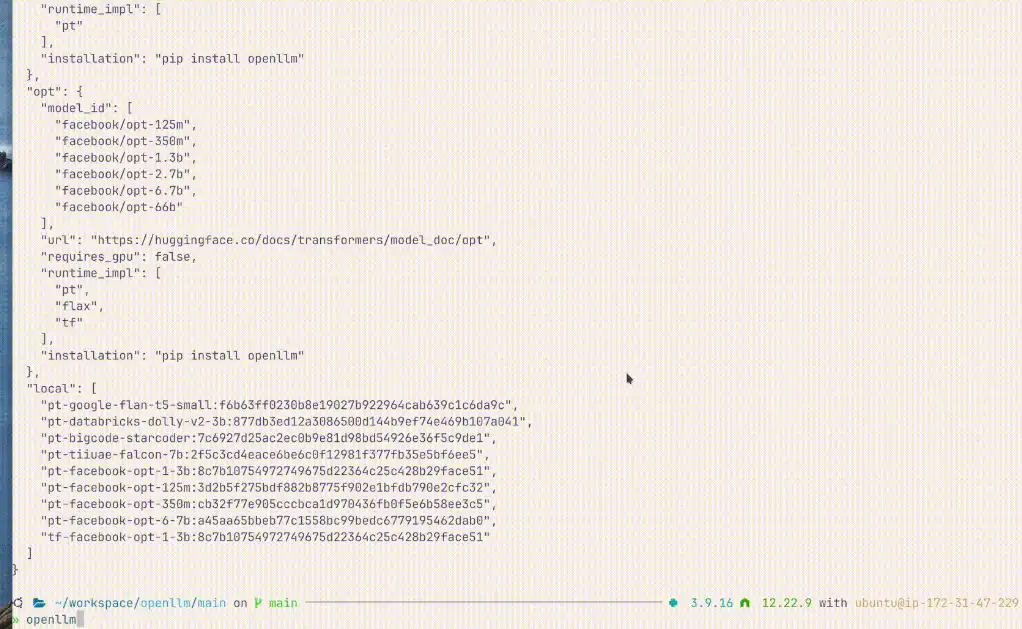

SCREENSHOTS:

OpenLLM

DESCRIPTION:

An open platform for operating large language models (LLMs) in production. Fine-tune, serve, deploy, and monitor any LLM with ease. With OpenLLM, you can run inference with any open-source large language model, deploy to the cloud or on-premises, and build powerful AI apps. It has built-in support for a wide range of open-source LLMs and model runtimes, including Llama 2, StableLM, Falcon, Dolly, Flan-T5, ChatGLM, StarCoder, and more. Serve LLMs over a RESTful API or gRPC with one command, and query via the WebUI, the CLI, our Python/JavaScript client, or any HTTP client.
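As a rough illustration of the "any HTTP client" option, the sketch below builds a JSON POST request using only the Python standard library. The host, port, endpoint path, and payload shape are assumptions made for illustration, not details confirmed by this page; check the API documentation of the server you actually run before relying on them.

```python
import json
import urllib.request

# Assumption: an OpenLLM server is already running locally.
# Host, port, and path are illustrative and may differ between releases.
BASE_URL = "http://localhost:3000"
GENERATE_PATH = "/v1/generate"  # hypothetical path; verify against your server


def build_generate_request(prompt, base_url=BASE_URL, path=GENERATE_PATH):
    """Build (but do not send) a JSON POST request carrying the prompt."""
    payload = json.dumps({"prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + path,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, timeout=60.0):
    """Send the request and return the decoded JSON response body."""
    request = build_generate_request(prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Only inspects the request; call generate() once a server is reachable.
    req = build_generate_request("What is a large language model?")
    print(req.get_method(), req.full_url)
```

Building the request separately from sending it makes it possible to inspect the URL and payload without a running server.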

Features

- Fine-tune, serve, deploy, and monitor any LLM with ease

- State-of-the-art LLMs

- Flexible APIs

- Freedom To Build

- Streamline Deployment

- Bring your own LLM

Programming Language

Python

Categories

This application can also be fetched from https://sourceforge.net/projects/openllm.mirror/. It is hosted on OnWorks so that it can be run online in the easiest way from one of our free operating systems.